Generative AI represents a major breakthrough in artificial intelligence (AI), and ChatGPT has emerged as its beacon of innovation, captivating minds and sparking a wave of creativity. With its ability to replicate human dialogue and decision-making, this ground-breaking technology marks a significant milestone in the widespread adoption of AI.

At the core of this transformative power lie the remarkable advancements in foundation models. These large models, comprising billions of parameters, serve as the foundation for constructing specialized models that excel in image and language generation. Among them, Large Language Models (LLMs) not only fall within the realm of generative AI but also play a pivotal role as a foundational building block.

The LLMs driving ChatGPT have been successful in unravelling the intricacies of language. They empower machines to acquire knowledge, comprehend context, interpret intent, and autonomously generate innovative outputs, which is itself an extraordinary milestone. Furthermore, these models undergo extensive pre-training on vast volumes of data, encompassing text, images, and audio. This preparation allows them to be adapted or fine-tuned for a wide array of tasks, providing unmatched flexibility and possibilities for reuse.

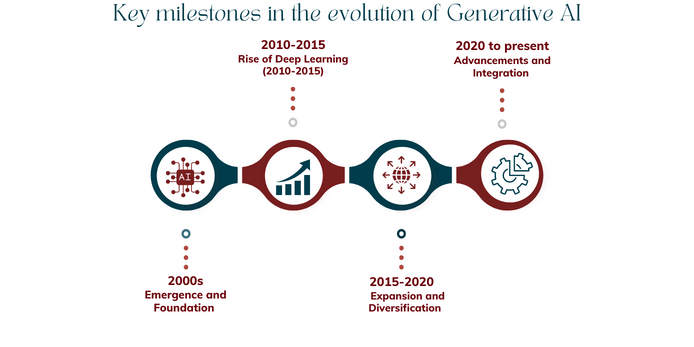

What has brought us to this point?

2000s: Machine Learning: The phase of analyzing and predicting

The initial decade of the 2000s witnessed a swift progression in diverse machine learning techniques capable of analyzing vast volumes of online data and extracting valuable conclusions through the process of "learning." Since then, businesses have recognized machine learning as an exceptionally potent domain within AI for data analysis, pattern identification, insight generation, prediction-making, and task automation. These advancements have allowed for unprecedented speed and scalability, surpassing what was previously deemed unattainable.

2010-2015: Deep Learning: The phase of vision and speech

In the 2010s, significant strides were made in the realm of machine learning, specifically in the domain of deep learning. These advancements revolutionized AI's perception capabilities, powering computer vision systems utilized by search engines and self-driving cars. Deep learning techniques enabled the classification and detection of objects, while also facilitating natural voice recognition, enabling popular AI speech assistants to interact with users seamlessly.

2015-2020: Expansion and Diversification

Generative AI expanded into various domains, showcasing its versatility and potential. Prominent advancements included GANs for realistic image synthesis and style transfer, while NLP models such as LSTMs and Transformers excelled in text generation and dialogue systems. Creative applications emerged, including artwork generation and virtual character design. Improved training techniques and access to labelled datasets significantly enhanced the quality and performance of generative models.

2020-present: Generative AI: The phase of language mastery

Leveraging the exponential growth in both size and capabilities of deep learning models, the decade of the 2020s will be characterized by a focus on mastering language. OpenAI's creation of the GPT-4 language model signifies the onset of a new era in the capabilities of AI applications based on language. The impact of such models on businesses is extensive, as language is intricately woven into every aspect of an organization's daily operations, encompassing institutional knowledge, communication, and processes. The implications of these advancements are far-reaching and will reshape the way businesses operate.

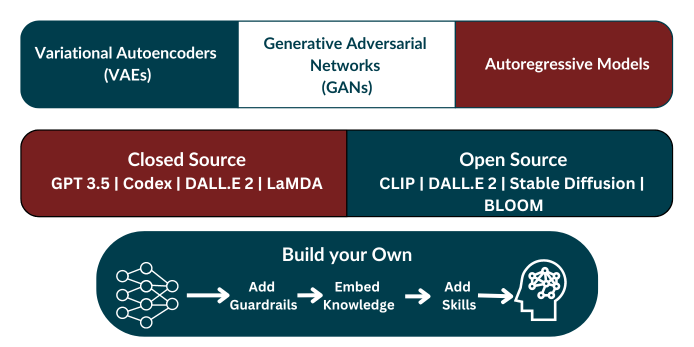

Types of Generative AI models

Generative AI models can be broadly categorized into three main groups:

Variational Autoencoders (VAEs)

- VAEs are generative models that learn underlying patterns in data and generate new samples by sampling from a learned latent space.

- They are widely used for tasks like image synthesis, anomaly detection, and data augmentation.

Generative Adversarial Networks (GANs)

- GANs consist of two neural networks: a generator and a discriminator, which compete in a game-like setting.

- The generator aims to produce realistic outputs, while the discriminator tries to distinguish between real and generated samples.

- GANs are used for tasks like image synthesis, style transfer, and creating deepfakes.
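
The competition between the two networks can be made concrete with a small numeric sketch. It assumes the discriminator outputs a probability that a sample is real and uses the common binary cross-entropy losses (with the non-saturating form for the generator); the probability values are made up for illustration and no network is actually trained here.

```python
import math


def mean(values):
    return sum(values) / len(values)


def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss: low when real samples score near 1 and generated ones near 0."""
    real_term = mean([math.log(p) for p in d_real])
    fake_term = mean([math.log(1.0 - p) for p in d_fake])
    return -(real_term + fake_term)


def generator_loss(d_fake):
    """Non-saturating generator loss: low when generated samples score near 1."""
    return -mean([math.log(p) for p in d_fake])


# Hypothetical discriminator outputs: probability that each sample is real.
d_real = [0.9, 0.8, 0.95]
caught = [0.1, 0.2, 0.05]  # discriminator mostly rejects the generated samples
fooled = [0.7, 0.8, 0.6]   # discriminator mostly believes the generated samples

print("discriminator winning:", discriminator_loss(d_real, caught), generator_loss(caught))
print("generator winning:    ", discriminator_loss(d_real, fooled), generator_loss(fooled))
```

When the generator fools the discriminator, its own loss falls while the discriminator's loss rises, which is the adversarial tension that training alternates between.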

Autoregressive Models

- Autoregressive models generate new samples by modeling the probability distribution of each element conditioned on previous elements.

- Popular examples include PixelCNN and WaveNet, which have been successful in generating images and speech.
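
As a rough illustration of the autoregressive idea, the sketch below fits a character-level bigram model, where each character is conditioned only on the one before it, and then samples text one element at a time. This is a deliberately tiny stand-in: PixelCNN and WaveNet condition on much longer contexts using neural networks, and the corpus and function names here are invented for the example.

```python
import random
from collections import Counter, defaultdict


def fit_bigram(text):
    """Estimate p(next_char | previous_char) from raw counts."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(text, text[1:]):
        counts[prev][nxt] += 1
    model = {}
    for prev, counter in counts.items():
        total = sum(counter.values())
        model[prev] = {ch: n / total for ch, n in counter.items()}
    return model


def sample(model, start, length, seed=0):
    """Generate characters one at a time, each drawn conditioned on the previous one."""
    rng = random.Random(seed)
    out = [start]
    while len(out) < length:
        dist = model.get(out[-1])
        if not dist:  # no observed successor for this character
            break
        chars = list(dist)
        weights = [dist[c] for c in chars]
        out.append(rng.choices(chars, weights=weights, k=1)[0])
    return "".join(out)


corpus = "the cat sat on the mat and the rat sat on the cat"
model = fit_bigram(corpus)
text = sample(model, "t", 20)
print(text)
```

The loop in `sample` is the essential pattern: the model never produces the whole output at once, only the next element given what has been generated so far.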

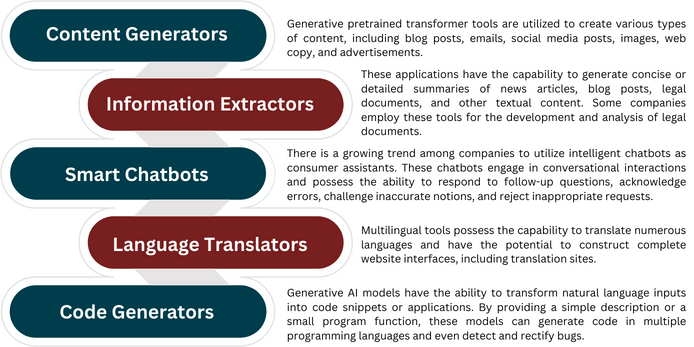

Applications of Generative AI

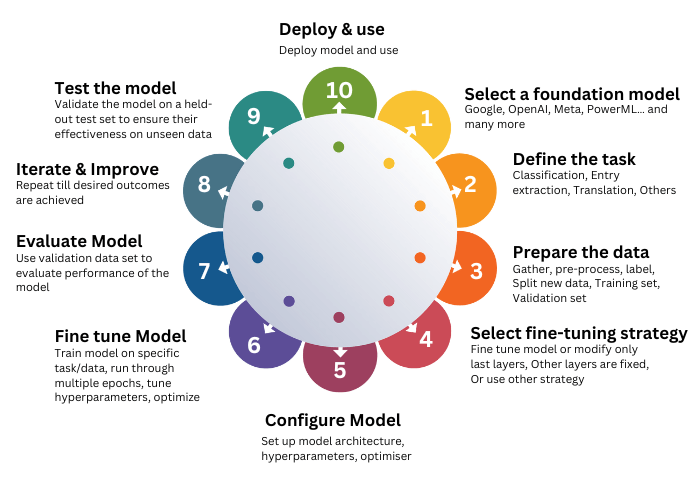

The process for extracting value from Generative AI

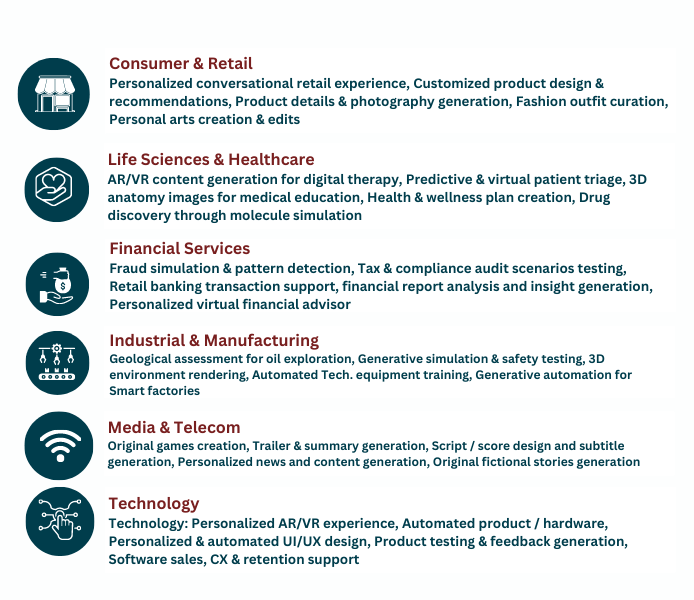

Industry use cases

Critical risks of Generative AI to consider before adopting the technology

Copyright Infringement

Training Generative AI models on copyrighted data raises the risk of IP lawsuits, necessitating compliance with copyright laws by model providers and the development of appropriate legal frameworks.

Lack of trust

Generative AI may generate factually incorrect responses that appear highly convincing. To mitigate the risks of using inaccurate information, companies should enforce double-checking of all Generative AI outputs and restrict its usage to non-critical tasks for now.

Biased outputs

Generative AI models trained on biased real-world data can inherit those biases. Mitigation techniques such as Reinforcement Learning from Human Feedback (RLHF) aim to reduce bias, although this approach is not flawless.

Energy use & environmental harm

Generative AI consumes significant compute energy for training and usage, requiring the adoption of sustainable energy sources to mitigate its environmental impact.

Phishing and fraud

Generative AI facilitates cybercrime by enabling the instant creation of convincing phishing emails and deepfakes. To mitigate this risk, companies should enhance cybersecurity protocols, provide employee training on emerging safety threats, and potentially employ Generative AI for fraud detection.

Shadow AI

To mitigate risks from employee misuse of external generative AI tools, companies should establish clear guidelines and policies that provide adequate guidance and supervision for their use.

Capability overhang

Generative AI's probabilistic nature poses the risk of unexpected capabilities during deployment, such as security vulnerabilities. While challenging to fully mitigate, thorough pre-launch testing can help address this risk.

Proprietary data leak

To mitigate the risk of proprietary data leakage during cloud-based training of Generative AI models, companies can opt for on-premises training instead, although this decision may involve additional tradeoffs.

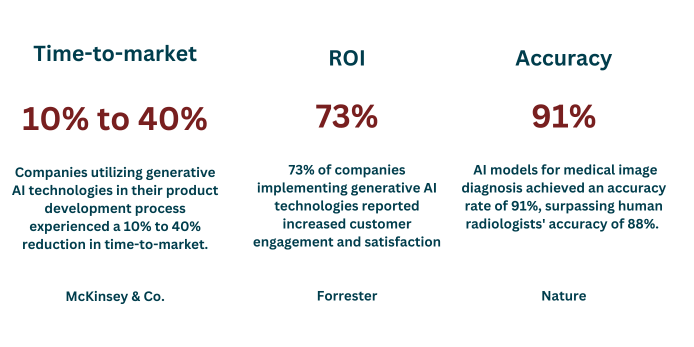

Companies realize the value that generative AI solutions bring by enabling them to drive innovation, streamline processes, enhance productivity, and unlock new opportunities for growth and competitiveness.

The way forward: Consume, Customize, or Build your Own

Off the Shelf

Organizations can use paid subscriptions or corporate user plans of Generative AI tools like ChatGPT, Jasper, Notion, etc., to train and test employees without the risk of exposing confidential company data. Use cases are restricted to generating low-quality and low-risk content.

Customize

Organizations can enhance the capabilities of their applications by integrating them with LLMs. This can be achieved by consuming Generative AI and LLM applications through APIs and customizing them to a certain extent for specific use cases, using prompt engineering and related techniques such as prompt tuning and prefix learning.
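
A minimal sketch of the prompt engineering side of this approach might look like the following. It builds a few-shot prompt from a task description and worked examples, then passes it to whatever completion function the organization uses. The task, examples, and `stub_complete` function are hypothetical placeholders and no real vendor API is called; prompt tuning, by contrast, trains additional parameters and is not shown here.

```python
from typing import Callable, List, Tuple

# Hypothetical task description and examples, for illustration only.
TASK = "Classify each customer message as 'billing', 'shipping', or 'other'."
EXAMPLES: List[Tuple[str, str]] = [
    ("I was charged twice this month.", "billing"),
    ("My parcel has not arrived yet.", "shipping"),
]


def build_prompt(task: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Assemble a few-shot prompt: instructions, worked examples, then the new input."""
    lines = [task, ""]
    for message, label in examples:
        lines += [f"Input: {message}", f"Output: {label}", ""]
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


def classify(query: str, complete: Callable[[str], str]) -> str:
    """Send the assembled prompt to a completion function and tidy the reply."""
    return complete(build_prompt(TASK, EXAMPLES, query)).strip()


def stub_complete(prompt: str) -> str:
    """Stand-in for a real LLM API call; always returns the same label."""
    return " billing "


prompt = build_prompt(TASK, EXAMPLES, "Why is my invoice higher than usual?")
print(prompt)
print(classify("Why is my invoice higher than usual?", stub_complete))
```

Keeping the completion call behind a plain function argument makes it straightforward to swap providers or to test the prompt logic without network access.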

Build your own Model

By training their own LLM, organizations can establish a significant competitive advantage, as it enables superior LLM performance, either across a wide range of applications or custom-tailored to their specific industry. This creates a sustainable edge, particularly when coupled with a positive data/feedback loop from LLM deployments.

How can Navikenz help?

Navikenz provides comprehensive support and expertise to companies, ensuring successful implementation of Generative AI solutions tailored to their unique needs, enabling them to unlock the full potential of this transformative technology.

Partnerships

Exploring Generative AI in Workflow Automation

Our latest video explores the impact of Generative AI on workflow automation. In today's fast-paced business environment, efficiency and innovation are not just goals; they are necessities. We are excited to showcase the solutions we have developed using Generative AI to streamline processes, reduce manual effort, and enhance decision-making in the Life Sciences & Healthcare industry.

In this video, get an insider look at how our cutting-edge technology integrates seamlessly into existing workflows, turning complex tasks into automated, straightforward processes. From reducing errors and operational costs to accelerating project timelines, our solutions are designed to give businesses a competitive edge.

Experience the Future of Shopping with the Navikenz RetailBot

Are you tired of traditional chatbots that leave you feeling lost and frustrated while shopping online? Navikenz RetailBot is here to change that, offering a seamless and user-friendly shopping experience.

To see the RetailBot in action and discover how it can simplify your online shopping journey, we invite you to watch our demo on our website. Experience the convenience of browsing through products, making purchases, tracking orders, and even handling returns effortlessly.

Don't miss out on the chance to revolutionize your online shopping experience. Join us in the future of retail today!